Ethical Considerations in AI-Driven Learning: Navigating Challenges and Protecting Student Rights

The rise of artificial intelligence (AI) in education brings transformative potential to classrooms worldwide. While AI-driven learning can personalize education and boost efficiency, it also raises critical ethical concerns. In this article, we’ll explore the ethical considerations in AI-driven learning, examine the challenges these systems present, and provide actionable steps for protecting student rights.

Introduction: AI in Modern Education

AI-driven learning platforms are revolutionizing the education sector. From adaptive testing systems to personalized learning algorithms, artificial intelligence shapes how content is delivered, assessed, and experienced by students. However, as AI permeates more educational processes—from lesson planning to student evaluation—the importance of ethical considerations in AI-powered education becomes paramount.

This guide uncovers the principal ethical challenges, their impact on students, and best practices for educators, parents, and EdTech developers to safeguard student rights in the age of AI.

Why Ethical Considerations Matter in AI-Driven Learning

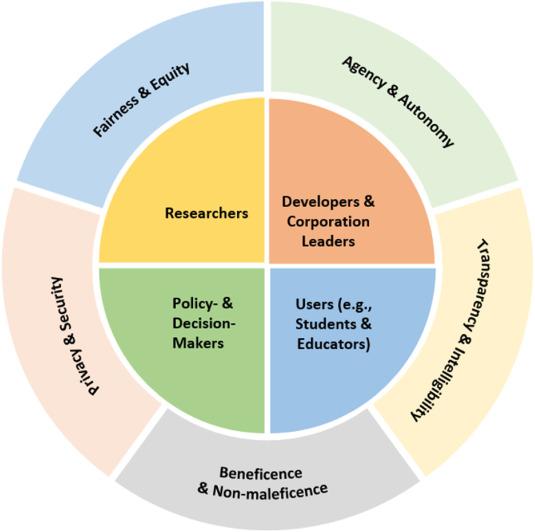

- Safeguarding student autonomy: Students must have control over their data and learning trajectory.

- Ensuring fairness: AI systems should not introduce or perpetuate biases in educational opportunities.

- Protecting privacy: Student data used to train AI models must be handled with the highest standards of security and confidentiality.

- Transparency and accountability: Stakeholders must understand how AI-driven decisions are made and have recourse when errors occur.

Principal Ethical Challenges in AI-Powered Learning

1. Data Privacy and Security

AI-powered education platforms often require vast amounts of personal data. This includes behavioral data, academic records, and sometimes even biometric data. The ethical questions surrounding student data privacy are significant:

- Who owns the student data?

- How is it being used and secured?

- Can students (and parents) opt out of data collection?

The risk of data breaches or misuse highlights the urgent need for robust cybersecurity protocols and explicit, informed consent procedures.

2. Algorithmic Bias and Fairness

AI learning algorithms can inadvertently reinforce social biases present in the datasets they’re trained on. For example, if a predictive system is used to allocate resources or recommend programs, it must avoid disadvantaging certain groups, especially those that have been historically marginalized.

- Unequal representation in training data can lead to biased outcomes.

- Lack of regular audits may allow discriminatory patterns to persist.

3. Transparency and Explainability

It’s imperative that educational stakeholders understand how AI-driven decisions are made. Without transparency, students and educators may not trust, or be able to challenge, an AI’s recommendation, grade, or feedback.

- Are AI decisions explainable in plain language?

- Is there a clear appeals process when errors occur?

4. Consent and Student Autonomy

Children and teenagers are particularly vulnerable in digital spaces. Meaningful, age-appropriate consent and the ability to opt out of AI-driven activities are foundational ethical expectations.

- Are students (and their guardians) fully informed about AI usage?

- Can students control how AI affects their learning journey?

Benefits of Ethically Designed AI-Driven Learning Systems

Despite the ethical challenges, AI-powered education offers tangible benefits when designed responsibly:

- Personalized Learning: Students receive tailored content and pacing, resulting in improved engagement and outcomes.

- Early Intervention: AI can flag students at risk, allowing educators to provide timely support.

- Inclusivity: Adaptive tools help bridge learning gaps for students of varying abilities and backgrounds.

- Administrative Efficiency: Educators can automate routine tasks, freeing time for direct student interaction.

Navigating the Challenges: Protecting Student Rights in AI-Powered Education

1. Implementing Robust Data Protection Protocols

- Adopt encryption and secure storage for all student records.

- Regularly audit systems for vulnerabilities and data leaks.

- Minimize data collection: gather only what’s essential for learning goals.
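To make the data-minimization step concrete, here is a minimal Python sketch. It assumes a hypothetical record layout and a hypothetical allow-list of fields (`ALLOWED_FIELDS`); neither comes from any particular platform. It keeps only the fields a learning feature needs and replaces the raw student ID with a keyed hash, using Python's standard `hmac` and `hashlib` modules. Pseudonymization of this kind complements encryption and secure storage; it does not replace them.

```python
import hashlib
import hmac

# Hypothetical allow-list: only the fields the learning feature actually needs.
ALLOWED_FIELDS = {"grade_level", "quiz_score", "lesson_id"}


def pseudonymize_id(student_id: str, secret_key: bytes) -> str:
    """Replace a student ID with a keyed hash (HMAC-SHA256, hex encoded)."""
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and swap the raw ID for a pseudonym."""
    minimized = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    minimized["student_ref"] = pseudonymize_id(record["student_id"], secret_key)
    return minimized


if __name__ == "__main__":
    # Illustrative record; every value here is made up.
    raw = {
        "student_id": "S-1001",
        "name": "Example Student",
        "home_address": "123 Example Street",
        "grade_level": 9,
        "quiz_score": 87,
        "lesson_id": "bio-04",
    }
    # In practice the key would come from a secrets manager, not source code.
    print(minimize_record(raw, b"demo-key-not-for-production"))
```

The name and home address never reach the analytics layer, and the same student always maps to the same `student_ref` as long as the key is unchanged, so progress can still be tracked over time.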

2. Ensuring Algorithmic Fairness

- Use diverse and representative datasets for AI training.

- Conduct impartial, third-party audits to uncover biases.

- Continuously update algorithms to address new forms of bias.
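One simple check an audit might start with is comparing how often each student group receives a positive decision. The Python sketch below is an illustration under assumptions, not a complete audit: the group labels and decisions are made up, and the function names `selection_rates` and `disparate_impact_ratio` are hypothetical. It computes the selection rate per group and the ratio of the lowest rate to the highest.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive decisions for each group.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group was selected at all, so the rates are equal
    return min(rates.values()) / highest


if __name__ == "__main__":
    # Made-up decisions: group A is recommended 3 times out of 4, group B once.
    decisions = [
        ("A", True), ("A", True), ("A", True), ("A", False),
        ("B", True), ("B", False), ("B", False), ("B", False),
    ]
    rates = selection_rates(decisions)
    print(rates)                                   # {'A': 0.75, 'B': 0.25}
    print(f"{disparate_impact_ratio(rates):.2f}")  # 0.33
```

A low ratio does not prove discrimination on its own, but it flags where auditors should look more closely. Some reviewers use a threshold such as 0.8 as a rule of thumb; any cutoff should be treated as a policy choice rather than a technical fact.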

3. Increasing Transparency

- Clearly communicate how AI impacts learning and decision-making.

- Provide stakeholders access to decision-making logic in understandable formats.

- Establish a formal process for correcting AI-driven errors.
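As an illustration of what "understandable formats" can look like, the Python sketch below turns a simple weighted score into plain-language statements. It assumes a hypothetical linear scoring model whose weights, feature names, and labels are invented for this example; real systems are often more complex and may need dedicated explanation tooling.

```python
def explain_score(weights, features, labels):
    """Describe each factor's contribution to a weighted score in plain language.

    weights:  factor name -> weight in the (hypothetical) linear model
    features: factor name -> the student's value for that factor
    labels:   factor name -> human-readable label
    """
    contributions = {name: weights[name] * features[name] for name in weights}
    total = sum(contributions.values())
    # Show the most influential factors first.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    lines = [f"Overall score: {total:.1f}"]
    for name, value in ranked:
        if value > 0:
            lines.append(f"{labels[name]} raised the score by {value:.1f}")
        elif value < 0:
            lines.append(f"{labels[name]} lowered the score by {abs(value):.1f}")
        else:
            lines.append(f"{labels[name]} had no effect on the score")
    return lines


if __name__ == "__main__":
    # Invented weights and values, for illustration only.
    weights = {"quiz_avg": 0.5, "missed_assignments": -2.0}
    features = {"quiz_avg": 80, "missed_assignments": 3}
    labels = {"quiz_avg": "Quiz average", "missed_assignments": "Missed assignments"}
    for line in explain_score(weights, features, labels):
        print(line)
```

A student or parent who sees "Missed assignments lowered the score by 6.0" has something specific to question or correct, which is what an appeals process needs to work.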

4. Involving Stakeholders in the Development Process

- Invite teachers, students, and parents to participate in AI tool design and policy-making.

- Use pilot programs and feedback sessions to improve ethical compliance.

Case Studies: Ethical Considerations in Action

Case Study 1: Bias in College Admissions Algorithms

In 2020, a university’s AI-powered admissions tool was found to disadvantage applicants from certain socioeconomic backgrounds. After students and advocacy groups raised concerns, the school revised its algorithm, engaged independent auditors, and increased transparency in its admissions process. This case highlights the importance of algorithmic fairness and stakeholder involvement in AI in education.

Case Study 2: Protecting Student Privacy in K-12 Schools

An EdTech provider partnered with a public school district to implement an AI-driven personalized learning system. Prior to launch, they conducted a thorough privacy impact assessment, minimized data collection, and established strict parental consent protocols. Their proactive approach ensured ethical compliance while providing the benefits of AI-powered learning.

Practical Tips for Educators and Institutions

- Stay informed: Keep up-to-date with AI ethics frameworks and privacy laws such as GDPR and COPPA.

- Build a data stewardship culture: Appoint data protection officers and provide ongoing ethics training for staff.

- Involve your community: Hold regular forums to discuss experiences and concerns with AI-driven tools.

- Monitor and evaluate: Continuously track AI impacts and adjust policies as needed.

First-Hand Experience: Navigating AI Ethics in the Classroom

Maria Gomez, a high school science teacher in California, shares her approach:

“When our district introduced an AI-powered homework platform, I made transparency a priority with my students. We discussed what data would be collected, how the system suggested feedback, and how students could raise concerns about algorithmic errors. It sparked thoughtful conversations about digital rights and empowered students to take charge of their learning experience.”

Conclusion: Striking the Right Balance in AI-Driven Learning

As AI continues to reshape classrooms, prioritizing ethical considerations in AI-driven learning is not just a technical necessity—it’s a moral imperative. By proactively addressing challenges like data privacy, algorithmic bias, and consent, educators and technology providers can harness the benefits of AI-powered learning while respecting and protecting student rights.

Success requires ongoing dialogue, stakeholder involvement, and a strong commitment to transparency and fairness. As educational technology advances, so too must our dedication to nurturing an ethical, inclusive, and student-centric digital learning environment.