Ethical Considerations in AI-Driven Learning: Navigating Challenges and Responsible Practices

Introduction

Artificial Intelligence (AI) is revolutionizing education. From adaptive learning platforms to intelligent tutoring systems, AI-driven learning tools promise personalized experiences, improved outcomes, and scalable solutions. However, these technological advancements bring significant ethical considerations with them. How can educators, developers, and policymakers ensure that innovative educational technologies remain respectful of students’ rights, unbiased, and transparent? In this comprehensive guide, we’ll examine the core challenges of AI in education, highlight responsible practices, and offer actionable tips for stakeholders navigating this rapidly evolving landscape.

Understanding AI-Driven Learning in Education

AI-driven learning refers to the application of artificial intelligence technologies in educational settings. These systems can adapt to individual student needs, provide instant feedback, automate grading, and even identify at-risk learners. Popular examples include recommendation systems on online learning platforms and AI-powered chatbots that answer student queries.

- Personalization: Customizes learning paths for each student.

- Automation: Streamlines administrative tasks and grading.

- Data-Driven Insights: Analyzes performance data for targeted interventions.

While these capabilities can greatly enhance teaching and learning, they also introduce complex ethical issues requiring careful consideration.

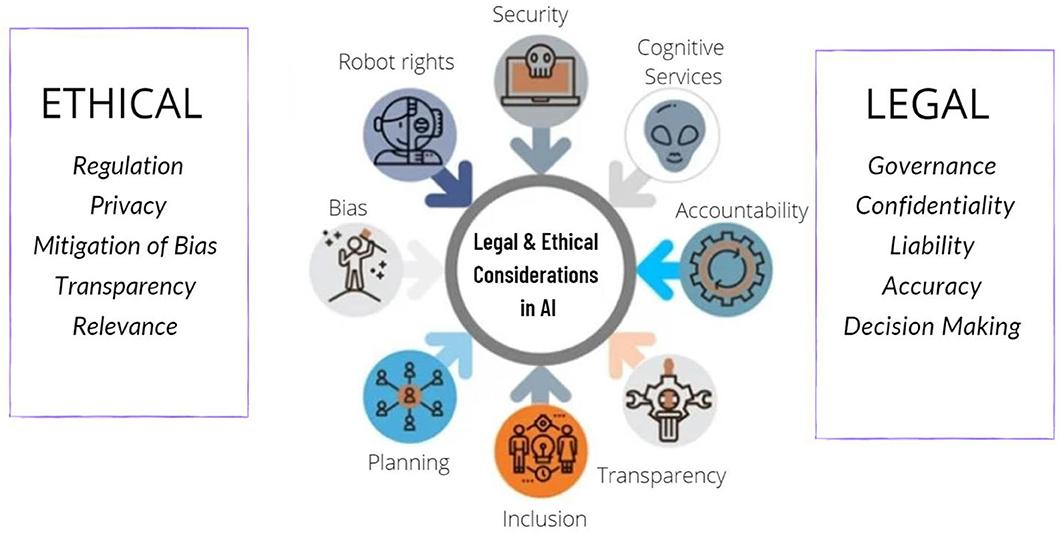

Key Ethical Considerations in AI-Driven Learning

AI in education comes with unique ethical dilemmas. The following issues are crucial for safe, fair, and responsible deployment of AI-powered educational tools:

1. Data Privacy and Security

- Student Data Protection: AI systems require large amounts of personal data to function effectively. Protecting student privacy and preventing misuse or unauthorized access is paramount.

- Compliance: Adhering to regulations like GDPR and FERPA is essential for ethical AI deployment in educational settings.
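One way to picture data protection in practice is to pseudonymize identifiers and drop unneeded fields before student records reach an AI system. The following is a minimal sketch, not a compliance recipe: the function names, the record fields, and the hard-coded key are all hypothetical, and a real deployment would load the key from a secrets store. Pseudonymization reduces exposure but is not anonymization, so the resulting data may still count as personal data.

```python
import hashlib
import hmac


def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Return a stable keyed hash (HMAC-SHA256) of a student ID."""
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, allowed_fields: set, secret_key: bytes) -> dict:
    """Keep only the fields the model needs and swap the ID for a pseudonym."""
    slim = {k: v for k, v in record.items() if k in allowed_fields}
    slim["student_key"] = pseudonymize(record["student_id"], secret_key)
    return slim


# Hypothetical example record; the key is a placeholder for illustration only.
key = b"replace-with-a-managed-secret"
record = {"student_id": "s-001", "name": "Ada", "quiz_score": 0.82}
print(minimize_record(record, {"quiz_score"}, key))
```

The name never leaves the source system, and the same student always maps to the same `student_key`, so progress can still be tracked over time.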

2. Algorithmic Bias and Fairness

- Mitigating Bias: AI algorithms can unintentionally reinforce existing social, racial, or gender biases found in training data, leading to unfair treatment of certain students.

- Diverse Testing: Ensuring diverse representation during AI system testing helps uncover and address potential biases.
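A simple bias check compares how often a system produces a given outcome for different student groups. The sketch below assumes a hypothetical tool that flags students as "at risk" and computes the flag rate per group plus the gap between the highest and lowest rate. The group labels and data are invented for illustration, and a large gap is a prompt for investigation rather than proof of unfairness.

```python
from collections import defaultdict


def flag_rates(records):
    """Return the share of flagged students within each group.

    `records` is an iterable of (group_label, is_flagged) pairs.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_flagged in records:
        totals[group] += 1
        flagged[group] += int(is_flagged)
    return {group: flagged[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group flag rate."""
    return max(rates.values()) - min(rates.values())


# Toy data: group A is flagged 1 time in 4, group B 2 times in 4.
records = [
    ("A", True), ("A", False), ("A", False), ("A", False),
    ("B", True), ("B", True), ("B", False), ("B", False),
]
rates = flag_rates(records)
print(rates, parity_gap(rates))  # gap is 0.25 for this toy data
```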

3. Transparency and Explainability

- Black Box Problem: Many AI models operate as “black boxes,” making decisions that are difficult to interpret or explain to educators, students, and parents.

- Stakeholder Awareness: Students, educators, and guardians should understand how AI-based decisions are made and what data is being used.
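For a simple linear scoring model, an explanation can be as direct as listing how much each input contributed to the result. The sketch below assumes a hypothetical risk score with invented feature names and weights. It applies only to linear models; genuinely opaque models need dedicated explanation methods.

```python
def explain_score(weights, features):
    """Return a linear score and its per-feature contributions.

    Contributions are sorted by absolute size, largest first.
    """
    contributions = {
        name: weights[name] * features.get(name, 0.0) for name in weights
    }
    score = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


# Hypothetical weights and one student's inputs, for illustration only.
weights = {"missed_assignments": 0.5, "quiz_average": -2.0, "days_inactive": 0.25}
features = {"missed_assignments": 4, "quiz_average": 0.5, "days_inactive": 2}

score, ranked = explain_score(weights, features)
print(f"risk score: {score}")
for name, value in ranked:
    print(f"  {name}: {value:+.2f}")
```

A teacher or student reading this output can see which inputs drove the score and which pulled it down.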

4. Accountability

- Clear Responsibility: If an AI system makes an educational recommendation or automates a decision, who is accountable when errors occur?

- Oversight: Human-in-the-loop oversight is crucial for high-stakes educational decisions.
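Human-in-the-loop oversight can be expressed as a routing rule: anything high-stakes or low-confidence goes to a person before it takes effect. The sketch below is one possible shape for such a rule. The `Recommendation` fields, the 0.9 threshold, and the example actions are assumptions for illustration, not values from any particular product.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    student_key: str
    action: str
    confidence: float  # model's confidence in [0, 1]
    high_stakes: bool  # e.g. placement or disciplinary decisions


def route(rec: Recommendation, min_confidence: float = 0.9) -> str:
    """Return 'human_review' for high-stakes or low-confidence items, else 'auto'."""
    if rec.high_stakes or rec.confidence < min_confidence:
        return "human_review"
    return "auto"


# Hypothetical examples: a practice suggestion versus a course placement.
print(route(Recommendation("k1", "suggest extra practice set", 0.95, False)))
print(route(Recommendation("k2", "move to remedial track", 0.99, True)))
```

Note that the high-stakes check ignores confidence entirely: a very confident model still does not get the final say on consequential decisions.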

5. Equity of Access

- Digital Divide: Not all students or schools have equal access to high-quality AI-driven tools or the internet, potentially widening achievement gaps.

- Inclusive Design: Ethical AI must prioritize inclusivity and accessibility across socioeconomic, linguistic, and physical abilities.

Benefits of Ethical AI in Education

Despite these challenges, upholding ethical considerations in AI-driven learning can empower education systems and students alike. When responsibly designed, AI tools can:

- Democratize access to quality education

- Enable personalized, student-centered learning

- Provide real-time feedback, supporting at-risk learners

- Streamline administrative processes, freeing teacher time for instruction

Focusing on responsible practices helps maximize these benefits while minimizing unintended negative consequences.

Practical Tips for Navigating Ethical Challenges in AI-Driven Learning

Here are actionable, best-practice recommendations for educators, developers, and policymakers:

- Engage Stakeholders Early: Involve educators, students, parents, and technologists in the design and evaluation of AI systems to reflect real-world needs and concerns.

- Adopt “Privacy By Design” Principles: Build privacy features into AI systems from the outset, not as an afterthought.

- Regularly Audit and Test for Bias: Conduct frequent algorithm audits to identify and address inequalities in AI-based educational outcomes.

- Invest in Transparency Tools: Use explainable AI (XAI) methods to make system decisions understandable for all users.

- Promote Digital Literacy: Educate students and teachers about how AI works to empower them as informed users.

- Establish Clear Accountability Structures: Identify who is responsible for decisions made or supported by AI and ensure robust grievance procedures exist.

- Ensure Universal Accessibility: Design with inclusivity in mind, making AI tools usable by students with disabilities or from underserved backgrounds.

Case Studies: Ethical AI in Educational Practice

To illustrate how these ethical considerations play out in practice, let’s examine a few real-world scenarios:

Case Study 1: Mitigating Bias in Automated Grading

A university piloted an AI system to streamline essay grading. Initial tests found systematic discrepancies in scores for essays written by non-native English speakers. By introducing diverse language samples in the algorithm’s training data and consulting linguistic experts, the university reduced grading bias, improving fairness and trust in the tool.

Case Study 2: Transparency in Adaptive Learning Platforms

An education technology provider adopted explainable AI features, showing teachers and learners how adaptive learning recommendations were generated. This built trust and empowered educators to customize AI suggestions to fit classroom context.

Case Study 3: Safeguarding Student Data

A K-12 school district introduced an AI-powered monitoring tool to flag students at academic risk. The district established strong data governance policies, obtained parental consent, and set up an independent ethics committee to continuously monitor ethical impacts, exemplifying best practices in data privacy and oversight.

First-Hand Experiences: Voices from the Field

Teachers and students are at the forefront of implementing and experiencing AI in the classroom. Here are a few perspectives:

- Teacher’s Viewpoint: “AI-enabled tutoring has helped me identify students who are struggling earlier, but it’s crucial that I retain the final say in interventions. Automated systems must remain tools, not replacements for professional judgment.”

- Student Viewpoint: “I appreciate when learning platforms explain why I’m getting recommended certain lessons. It makes it feel less random, and I understand how to improve.”

- Developer’s Insight: “Building AI for education means constant vigilance against bias. We work closely with teachers from different backgrounds to make sure our tools help all students, not just a select few.”

Conclusion: Fostering Responsible AI Practices in Education

The ethical considerations in AI-driven learning are not just academic; they are central to building trustworthy, equitable, and effective educational technologies. By proactively addressing issues like privacy, transparency, bias, and accessibility, schools and developers can ensure that AI continues to drive positive change in education, supporting every learner’s journey.

As AI-driven learning tools become increasingly embedded in classrooms worldwide, a shared commitment to responsible, student-centered innovation must guide every decision. Through collaboration, vigilance, and ethical design, the promise of AI in education can be fully realized, delivering smarter, fairer, and more inclusive educational experiences for all.