Ethical Considerations in AI-Driven Learning: Navigating Trust, Bias, and Student Privacy

Artificial Intelligence (AI) has rapidly transformed the educational landscape, enabling schools, educators, and learners to achieve better outcomes through personalized, data-driven experiences. However, as AI-driven learning systems become more common in classrooms and online platforms, it’s essential to consider their ethical implications. Trust, bias, and student privacy are at the heart of these conversations.

Introduction: Why Ethics Matter in AI-Powered Education

AI-powered learning platforms can revolutionize education by providing adaptive feedback, personalized pathways, and predictive analytics that support both teachers and students. But with great technological power comes great responsibility. Education isn’t just about knowledge transfer; it’s about nurturing trust, equity, and respect for all learners. Attending to ethical considerations in AI-driven learning helps ensure that technology enhances learning rather than undermining fairness or violating privacy.

The Benefits of AI-Driven Learning in Education

Before diving into the ethical concerns, let’s acknowledge the notable advantages of AI in the educational sector:

- Personalized Learning: AI adapts content to each student’s needs and learning pace.

- Efficiency: Automates administrative tasks, freeing educators to focus on instruction.

- Data-Driven Insights: Identifies student strengths and weaknesses for targeted interventions.

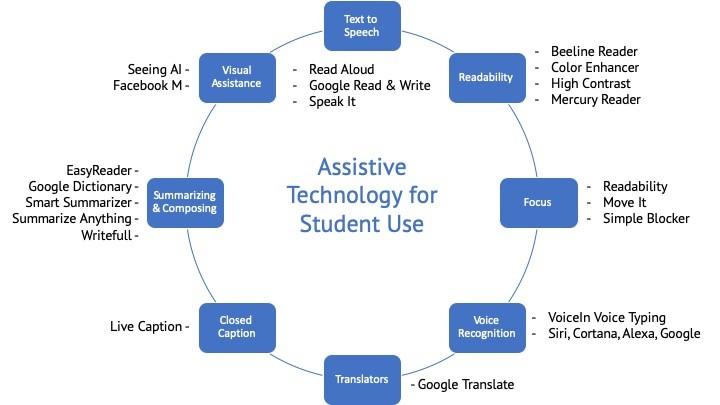

- Accessibility: AI-powered tools offer support for diverse learning needs and disabilities.

However, to fully realize these advantages, we must confront the ethical issues embedded in AI-powered education.

Trust: Building Confidence in AI-Driven Learning Systems

For AI to be effective in education, students, teachers, and parents must trust that these systems will act in the learners’ best interests. Several factors influence trust in AI-driven learning platforms:

- Transparency: Clarity about how the AI system arrives at its recommendations or grading decisions.

- Accountability: Clear lines of responsibility when mistakes or biases occur.

- Reliability: Consistent, accurate performance without frequent errors or unexplained “black box” outcomes.

Practical Tips for Building Trust

- Explainability: Choose or design AI systems that provide understandable, human-readable reasons for their actions.

- Human Oversight: Ensure there’s always a teacher or administrator who can verify or override AI decisions.

- Continuous Training: Educate students, parents, and educators about how AI works and its limitations.
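Explainability and human oversight can be built into how a system stores its outputs. The sketch below is one hypothetical way to do it, not a feature of any particular product: an AI suggestion carries a human-readable rationale and stays provisional until a named teacher either approves or overrides it. The `Recommendation` class, its fields, and the example values are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    """A hypothetical AI suggestion that stays provisional until a teacher reviews it."""
    student_id: str
    suggestion: str
    rationale: str  # human-readable reason shown to the reviewing teacher
    final_decision: Optional[str] = None
    reviewed_by: Optional[str] = None

    def approve(self, teacher: str) -> None:
        """Teacher accepts the AI suggestion as the final decision."""
        self.final_decision = self.suggestion
        self.reviewed_by = teacher

    def override(self, teacher: str, decision: str) -> None:
        """Teacher replaces the AI suggestion with their own decision."""
        self.final_decision = decision
        self.reviewed_by = teacher

    @property
    def is_final(self) -> bool:
        """Nothing takes effect until a human has reviewed it."""
        return self.reviewed_by is not None


if __name__ == "__main__":
    rec = Recommendation(
        student_id="s-001",
        suggestion="assign extra reading practice",
        rationale="reading quiz scores dropped over the last three weeks",
    )
    print(rec.is_final)  # False
    rec.override("Ms. Lee", "schedule a one-on-one check-in")
    print(rec.final_decision, "|", rec.reviewed_by)
```

Recording who made the final call also supports the accountability point above: every decision that reaches a student can be traced to a person, not just to the model.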

Bias in AI-Driven Learning

AI algorithms depend on data, and if that data is biased, so are the outcomes. Algorithmic bias in education can amplify existing social inequalities, misjudge student capabilities, or unfairly categorize learners.

Common Sources of Bias in Educational AI

- Historical Data: Past performance data might reflect systemic inequities.

- Incomplete Data: AI may lack sufficient data from minority or underrepresented student groups.

- Design Choices: The algorithm’s creators might inadvertently embed their assumptions or prejudices.

Addressing Bias: Best Practices

- Diverse Data Sets: Train AI models on data drawn from representative and inclusive student populations.

- Regular Auditing: Periodically review AI outcomes for patterns of socioeconomic, racial, or gender disparities.

- Inclusive Design Teams: Develop systems with input from diverse stakeholders.
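A regular audit can start with something as simple as comparing how often the system flags students in each group. The sketch below assumes a hypothetical record format of `(group, flagged)` pairs and hypothetical function names. The 0.8 threshold is borrowed loosely from the “four-fifths” rule of thumb used in US employment-selection guidance; it is an illustrative assumption, not an established standard for educational AI.

```python
from collections import defaultdict


def flag_rates(records):
    """Return the share of students flagged "at risk" in each group.

    `records` is an iterable of (group, flagged) pairs, where `group` is a
    demographic label and `flagged` is the AI system's boolean output.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_flagged in records:
        totals[group] += 1
        if is_flagged:
            flagged[group] += 1
    return {g: flagged[g] / totals[g] for g in totals}


def audit(records, threshold=0.8):
    """Compare the lowest and highest group flag rates.

    A min/max ratio below `threshold` means some groups are flagged at
    noticeably different rates, which should trigger human review of the
    model and its training data. The 0.8 default is illustrative only.
    """
    rates = flag_rates(records)
    if not rates:
        return rates, 1.0, False
    lowest, highest = min(rates.values()), max(rates.values())
    ratio = lowest / highest if highest > 0 else 1.0
    return rates, ratio, ratio < threshold


if __name__ == "__main__":
    # Hypothetical example data: (group label, flagged by the model?)
    sample = [
        ("group_a", True), ("group_a", False), ("group_a", False), ("group_a", False),
        ("group_b", True), ("group_b", True), ("group_b", False), ("group_b", False),
    ]
    rates, ratio, needs_review = audit(sample)
    print(rates)  # {'group_a': 0.25, 'group_b': 0.5}
    print(ratio, needs_review)  # 0.5 True
```

A disparity like this does not prove the model is unfair, since groups may genuinely differ in need, but it tells auditors exactly where to look more closely.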

Student Privacy: Safeguarding Sensitive Data

One of the most pressing ethical concerns in AI-powered education is privacy. AI tools often collect, analyze, and store vast amounts of sensitive student data, raising critical questions:

- Who owns student data?

- What data is collected, and why?

- How secure is the data against breaches or unauthorized access?

Protecting Student Privacy: Key Approaches

- Data Minimization: Collect only what’s strictly necessary for educational purposes.

- Secure Storage: Use industry-standard encryption and robust access controls.

- Clear Policies: Draft and share transparent privacy agreements with students and families.

- Rights & Consent: Give students and guardians control over their data and obtain informed consent for its use.
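Data minimization and consent can be enforced in code at the point where data enters an AI tool. The sketch below is a hypothetical illustration: each declared purpose has an allowlist of fields, anything not on the list is dropped, and nothing is processed without recorded consent. The purposes, field names, and `minimize` function are assumptions made up for this example.

```python
# Hypothetical allowlist: the only fields each declared purpose may use.
ALLOWED_FIELDS = {
    "reading_recommendations": {"student_id", "reading_level", "recent_scores"},
    "attendance_reporting": {"student_id", "days_absent"},
}


def minimize(record, purpose, consent_given):
    """Return a copy of `record` containing only fields allowed for `purpose`.

    Raises PermissionError if consent has not been recorded, and KeyError if
    `purpose` was never declared in ALLOWED_FIELDS.
    """
    if not consent_given:
        raise PermissionError("no recorded consent for this student")
    allowed = ALLOWED_FIELDS[purpose]  # undeclared purposes fail loudly
    return {key: value for key, value in record.items() if key in allowed}


if __name__ == "__main__":
    raw = {
        "student_id": "s-001",
        "reading_level": 3,
        "recent_scores": [72, 80],
        "home_address": "123 Example St",  # not needed for reading support
        "free_lunch_status": True,         # sensitive and not needed
    }
    print(minimize(raw, "reading_recommendations", consent_given=True))
    # {'student_id': 's-001', 'reading_level': 3, 'recent_scores': [72, 80]}
```

Using an allowlist rather than a blocklist means new fields added upstream are excluded by default until someone deliberately justifies collecting them.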

Case Studies: Ethical Dilemmas in Practice

Case Study 1: Predictive Analytics and Grade Prediction

A school district deployed an AI system to predict student success and recommend interventions. While some students benefited from early support, research found that lower-income and minority students were flagged as “at risk” more frequently, not always because of their performance but because of biases in the historical data.

Lesson: Regular algorithm audits and open communication about the potential for bias are critical.

Case Study 2: Data Privacy and Parental Rights

An edtech startup offering personalized reading tools collected detailed learning data from children without adequately informing parents. When a data breach occurred, the data of thousands of students was exposed.

Lesson: Always prioritize user consent, data minimization, and strong cybersecurity measures.

First-Hand Experience: Educator Viewpoints on AI Ethics

“AI allows me to better identify students who need support, but I always double-check what the system recommends. There’s a risk of missing the bigger picture if we trust AI blindly.”

– High School Teacher, United States

Teachers emphasize that AI is a tool, not a replacement for professional judgment or empathy. Ethical training and continuous dialog are essential to keeping human values at the center of AI-powered education.

Balancing Innovation and Responsibility

Education leaders, developers, and policymakers must work collaboratively to harness the benefits of AI in educational technology while upholding ethical standards. Some strategies to balance innovation with responsibility include:

- Ethical Frameworks: Adopt or adapt frameworks (e.g., ISTE AI standards, UNESCO guidelines) to guide responsible AI deployment.

- Stakeholder Engagement: Involve teachers, parents, students, and technologists in the conversation from the start.

- Ongoing Evaluation: Establish feedback loops to continually assess the ethical impact of AI systems.

Conclusion: Moving Toward Ethical AI in Education

AI-driven learning offers transformative potential, but realizing it requires a steadfast commitment to trust, fairness, and privacy. Every stakeholder, from developers and district leaders to teachers and students, plays a role in shaping safe, equitable, and effective learning environments.

By centering ethical considerations in AI-powered education, we enhance not just learning outcomes, but also the well-being and agency of students everywhere. As technology evolves, so must our commitment to ethical, transparent, and responsible AI integration in education for present and future generations.