Ethical Considerations in AI-Driven Learning: Safeguarding Privacy, Fairness, and Trust in Education

Artificial Intelligence (AI) has rapidly transformed the landscape of modern education, offering personalized learning experiences, adaptive assessments, and innovative teaching tools. However, integrating AI into education also raises significant ethical questions, especially concerning student privacy, fairness, and trust. If not carefully managed, these advancements can inadvertently compromise student data, perpetuate bias, and erode confidence in the educational system. In this article, we explore the ethical considerations in AI-driven learning and provide practical guidance for building secure, equitable, and trustworthy AI-enhanced educational environments.

AI in Education: Potential & Ethical Challenges

The application of AI in education ranges from intelligent tutoring systems and automated grading to school management and curriculum customization. These innovations have the potential to:

- Enhance learning outcomes through personalization;

- Support teachers with data-driven insights and administrative automation;

- Enable scalable, inclusive education for diverse learners.

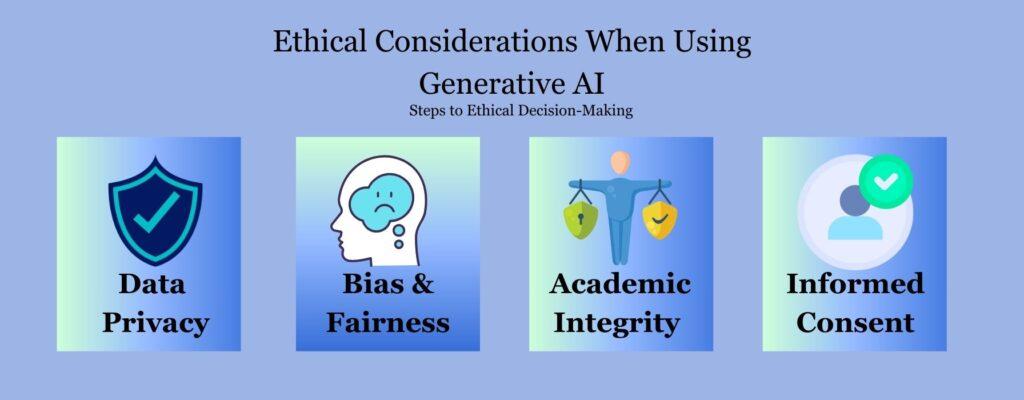

Yet, alongside these opportunities, AI implementation in schools introduces unique ethical challenges:

- Data privacy risks due to extensive collection and analysis of student data;

- Bias and unfairness stemming from flawed algorithms or unrepresentative training data;

- Trust issues if students, parents, and educators perceive AI systems as opaque or unreliable.

Safeguarding Privacy: Protecting Student Data

AI and Student Privacy Concerns

AI-driven learning platforms often rely on gathering vast amounts of data, including personal identifiers, academic records, behavioral analytics, and even emotion recognition. Without strict safeguards, such data can:

- Risk exposure to unauthorized parties;

- Be used for unintended or unethical purposes;

- Jeopardize student autonomy and confidentiality.

Best Practices for Privacy Protection

- Data Minimization: Collect only what is absolutely necessary for educational purposes.

- Clear Policies: State plainly what data is collected, how it is used, and who can access it.

- Security Measures: Implement robust encryption, access controls, and regular audits.

- Consent: Ensure informed parental and student consent for data collection and AI use.
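
To make the data minimization practice above concrete, here is a minimal Python sketch. It assumes a hypothetical tutoring feature that needs only a grade level and quiz scores; the field names, the `ALLOWED_FIELDS` allow-list, and the secret key are illustrative assumptions, not part of any real platform. It keeps only allow-listed fields and replaces the raw student ID with a keyed hash so records can still be linked.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this imaginary tutoring feature needs.
ALLOWED_FIELDS = {"grade_level", "quiz_scores"}


def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Replace a student ID with a keyed hash (HMAC-SHA256).

    The same ID and key always give the same pseudonym, so records can be
    linked without storing the raw ID alongside the learning data.
    """
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and swap the raw ID for a pseudonym."""
    minimized = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    minimized["student_ref"] = pseudonymize(record["student_id"], secret_key)
    return minimized


# Illustrative record; all values are made up.
raw = {
    "student_id": "S-1042",
    "name": "Example Student",
    "home_address": "123 Example Street",
    "grade_level": 7,
    "quiz_scores": [82, 91, 77],
}

# Assumption: in practice the key would come from a managed secret store.
clean = minimize_record(raw, secret_key=b"replace-with-a-managed-secret")
print(sorted(clean))  # ['grade_level', 'quiz_scores', 'student_ref']
```

Pseudonymized records are generally still treated as personal data under GDPR, so a step like this complements encryption, access controls, and consent rather than replacing them.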

Tip: Schools adopting AI technologies should work closely with IT and legal experts to ensure compliance with privacy laws such as FERPA (Family Educational Rights and Privacy Act) and GDPR (General Data Protection Regulation).

Ensuring Fairness: Addressing Algorithmic Bias in AI-Driven Learning

What is Algorithmic Bias?

Algorithmic bias occurs when AI systems produce systematically prejudiced outcomes due to biases in data or model design. In an educational setting, this can mean:

- Favoring students from certain backgrounds over others;

- Reinforcing stereotypes or historical inequalities;

- Limiting access to opportunities for marginalized groups.

How to Foster Fair and Equitable AI

- Diverse Datasets: Use training data that reflects the full spectrum of student backgrounds and learning styles.

- Ongoing Audits: Regularly review and test AI outcomes for signs of bias.

- Inclusive Design: Involve a broad range of stakeholders, including educators, students, and advocacy groups, in system development.

- Clear Appeal Mechanisms: Allow users to challenge or review AI-generated decisions affecting academic paths.
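
One way an ongoing audit might begin is by comparing outcome rates across student groups. The sketch below is an illustration under stated assumptions: the group labels, the decision data, and the 0.8 review threshold are all hypothetical. The threshold borrows the "four-fifths" heuristic from US employment guidance, which is not an education standard. A low ratio is a prompt for closer human review, not proof of bias.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return {group: share of positive outcomes} from (group, accepted) pairs."""
    totals = defaultdict(int)
    accepted = defaultdict(int)
    for group, was_accepted in decisions:
        totals[group] += 1
        if was_accepted:
            accepted[group] += 1
    return {group: accepted[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive outcome, so rates are equal
    return min(rates.values()) / highest


# Made-up audit sample: group A accepted 6 of 10 times, group B 3 of 10 times.
decisions = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumption: borrowed heuristic, tune per institution
if ratio < REVIEW_THRESHOLD:
    print(f"Flag for review: ratio {ratio:.2f}, rates {rates}")
```

A single ratio hides a lot, such as small sample sizes and overlapping group memberships, so a real audit would pair it with statistical testing and qualitative review by the stakeholders listed above.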

Case Study: A university using an AI-driven admissions tool discovered higher rejection rates for applicants from certain socioeconomic communities. After a thorough audit, the model was retrained with more representative data, resulting in fairer, more balanced admissions outcomes.

Building Trust: Fostering Transparency and Accountability in AI-Driven Learning

Why Trust Matters in Educational AI

Students, guardians, and teachers must trust that AI-powered educational tools will support—not hinder—academic growth. Trust is built through:

- Transparency: Clear explanations of how AI systems work and why decisions are made.

- Human Oversight: Maintaining a human-in-the-loop approach for significant assessments and recommendations.

- Ethical Governance: Establishing institutional policies for ethical AI sourcing, deployment, and review.
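
The human-in-the-loop idea above can be sketched as a simple routing rule. Everything here is hypothetical: the `Recommendation` fields, the queue names, and the 0.9 confidence cutoff are illustrative choices, not features of any particular product. The sketch sends high-stakes or low-confidence recommendations to a teacher, and every recommendation carries a plain-language explanation.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    student_ref: str    # pseudonymous reference, not a raw student ID
    action: str         # what the system suggests
    confidence: float   # model's self-reported score in [0, 1]
    high_stakes: bool   # e.g. affects placement, grades, or academic path
    explanation: str    # plain-language reason shown to teachers and families


def needs_human_review(rec: Recommendation, min_confidence: float = 0.9) -> bool:
    """High-stakes or low-confidence recommendations always go to a person."""
    return rec.high_stakes or rec.confidence < min_confidence


def route(rec: Recommendation) -> str:
    """Return the (hypothetical) queue a recommendation should be sent to."""
    return "teacher_review_queue" if needs_human_review(rec) else "auto_apply_with_log"


practice = Recommendation(
    student_ref="a1b2c3",
    action="assign extra fractions practice",
    confidence=0.95,
    high_stakes=False,
    explanation="Three recent quizzes show errors on fraction addition.",
)
placement = Recommendation(
    student_ref="a1b2c3",
    action="move to remedial math track",
    confidence=0.97,
    high_stakes=True,
    explanation="End-of-term scores fall below the course benchmark.",
)

print(route(practice))   # auto_apply_with_log
print(route(placement))  # teacher_review_queue
```

The design choice worth noting is that a high confidence score never overrides the high-stakes flag: the placement decision goes to a teacher even at 0.97.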

Actionable Steps for Schools and EdTech Providers

- Publish guides and FAQs explaining AI algorithms and outcomes;

- Appoint an ethics officer or committee to oversee AI-driven learning initiatives;

- Solicit regular feedback from students and parents to guide further AI improvements.

First-Hand Experience: “After our school adopted adaptive learning software, tech staff hosted workshops to demystify how student recommendations were generated. This transparency boosted parental confidence and allowed us to address their concerns more effectively.”

Benefits of Responsible AI in Education

Balancing ethical concerns with innovation unlocks the true potential of AI-driven learning. When privacy, fairness, and trust are protected, students and educators can enjoy:

- Personalized, adaptive instruction tailored to individual strengths and challenges;

- Early identification of learning gaps, enabling timely, targeted interventions;

- Reduced administrative burdens for faculty, freeing time for mentorship and creativity;

- Greater equity in academic opportunities for underserved or overlooked populations.

Pro Tip: Assess your school’s readiness for AI integration by conducting ethical impact assessments and encouraging regular dialogue among teachers, students, and technology vendors.

Practical Tips for Ethical AI Use in Education

Checklist for Educators and Institutions

- Audit regularly: Schedule periodic reviews of AI usage, outcomes, and data flows.

- Educate stakeholders: Train teachers, students, and administrators on responsible use and limitations of AI tools.

- Collaborate: Join forces with other schools and experts to share best practices and resources.

- Update policies: Adapt school policies as AI technologies and ethical standards evolve.

- Prioritize student wellbeing: Always keep the interests and needs of learners at the center of AI initiatives.

Conclusion

As AI-driven learning continues to reshape education worldwide, the ethical considerations of privacy, fairness, and trust must remain at the forefront. By proactively addressing data protection, combating algorithmic bias, and fostering transparent systems, educational institutions and technology providers can build learning environments where all students thrive. Responsible, ethical AI not only safeguards young minds but also fuels innovation, inclusiveness, and confidence in the future of education.

Ready to explore more? Stay informed on the latest developments in ethical AI in education and ensure your institution leads the way in building safe, fair, and trusted digital classrooms.