Ethical Considerations of AI in Education: Key Challenges and Solutions for Responsible Integration

Artificial Intelligence (AI) is rapidly transforming the education sector, reshaping everything from personalized learning to assessment automation and classroom management. While the adoption of AI in education offers promising advantages, it also introduces new ethical challenges that educators, policymakers, and technology providers must address. In this article, we’ll examine the core ethical considerations of AI in education, explore the key challenges, and propose actionable solutions for responsible AI integration so that technology serves all learners fairly and effectively.

Benefits of AI in Education: A Snapshot

- Personalized Learning: Tailored lesson plans and adaptive feedback help cater to individual student needs.

- Automation of Administrative Tasks: AI systems streamline grading, attendance, and scheduling, freeing educators to focus on teaching.

- Data-Driven Insights: AI analytics support early intervention by identifying students who may need additional help.

- Inclusive Education: Speech recognition and language translation tools break language barriers and support students with disabilities.

Despite these benefits, ethical use of AI in education is paramount to protect student rights, prevent discrimination, and foster trust in technology.

Key Ethical Challenges of AI in Education

1. Data Privacy and Security

One of the most pressing ethical considerations centers on student data privacy. AI-powered educational technologies collect vast amounts of personal data, raising questions about consent, data ownership, and the risk of data breaches.

- Transparency: Parents, students, and educators must know what data is being collected and how it is used.

- Security: Institutions must implement robust data protection measures to safeguard sensitive information.

- Compliant Practices: Providers must adhere to regulations such as FERPA, GDPR, and local privacy laws.

2. Algorithmic Bias and Discrimination

AI systems learn from existing data, which can reflect societal biases. Consequently, there’s a risk that AI algorithms may perpetuate inequalities or reinforce stereotypes in educational settings.

- Assessment Fairness: Biased data can lead to unfair evaluation and tracking of students, particularly those from marginalized backgrounds.

- Equity in Opportunities: Unchecked algorithms may limit access to gifted programs or additional support.

- Continuous Monitoring: Bias can emerge over time as AI systems evolve, requiring ongoing scrutiny and correction.

3. Transparency and Explainability

The “black box” nature of many AI algorithms makes it difficult for educators and students to understand how decisions are made. This lack of transparency can erode trust and hamper informed decision-making.

- Explainable AI: Stakeholders should be able to question and comprehend AI-based outcomes, especially around assessment and placement.

- Accountability: Clear guidelines must define who is responsible when AI makes erroneous or harmful decisions.

4. Informed Consent and Autonomy

Students and families must be empowered through informed consent—knowing both the potential benefits and risks of AI-powered educational technologies.

- Clarity: Consent forms must be simple, clear, and accessible to all stakeholders.

- Opt-Out Options: Students should have the choice to decline participation without academic penalty.

5. Teacher and Student Roles Redefined

As AI systems take on more educational tasks, there’s uncertainty about the evolving roles of educators and students. Striking the right human-tech balance is crucial for student engagement and development.

- Human Oversight: AI should support teachers, not replace them.

- Skill Development: Teachers need support and training to integrate AI responsibly and ethically.

Practical Solutions for Responsible AI Integration in Education

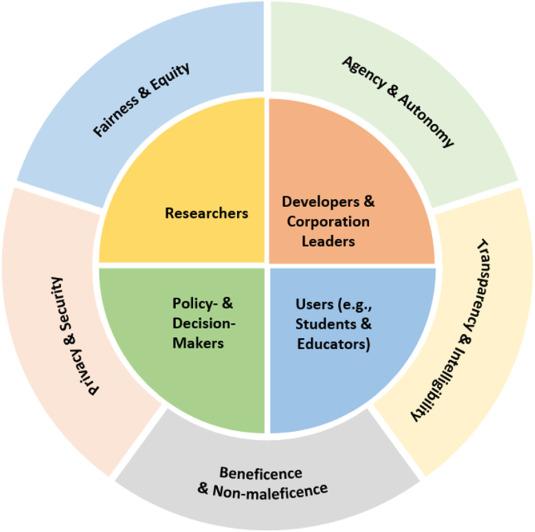

1. Establishing Robust Ethical Frameworks

Educational institutions should adopt comprehensive ethical guidelines specific to AI in education. These frameworks should address privacy, bias, transparency, and accountability.

2. Ongoing Professional Development for Educators

- Train educators on the ethical implications of AI, helping them recognize and mitigate potential issues.

- Promote digital literacy among teachers and administrators.

3. Promoting Algorithmic Transparency and Auditability

Require AI vendors to provide accessible documentation of their algorithms’ decision-making processes. Regular auditing can help identify and correct biases or errors.
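
As a rough illustration of what one step of a regular audit could look like, the sketch below compares the average AI-assigned score across student groups and flags a large gap for human review. The function name `audit_score_gap`, the group labels, the sample scores, and the `max_gap` threshold are all hypothetical choices made for this example. A real audit would use richer statistical tests and much more data.

```python
from collections import defaultdict
from statistics import mean


def audit_score_gap(records, max_gap=5.0):
    """Compare mean AI-assigned scores across groups.

    records: iterable of (group_label, score) pairs.
    max_gap: hypothetical threshold (in score points) above which the
             result is flagged for human review.
    """
    scores_by_group = defaultdict(list)
    for group, score in records:
        scores_by_group[group].append(score)

    # Average score per group, then the spread between best and worst.
    group_means = {g: mean(s) for g, s in scores_by_group.items()}
    gap = max(group_means.values()) - min(group_means.values())

    return {"group_means": group_means, "gap": gap, "flagged": gap > max_gap}


# Illustrative, made-up data: (group label, essay score out of 100).
sample = [
    ("native", 82),
    ("native", 78),
    ("non_native", 70),
    ("non_native", 74),
]
report = audit_score_gap(sample)
print(report)
```

A flagged result does not prove bias on its own. It is a prompt for reviewers to look more closely at how the system scores the affected group.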

4. Engaging Stakeholders in the Decision-Making Process

- Include students, parents, teachers, and community members when selecting and evaluating educational AI tools.

- Foster an environment of continued feedback and iterative improvement.

5. Building Privacy by Design

Integrate privacy safeguards into AI systems from the outset—minimizing data collection, enforcing strict access controls, and anonymizing sensitive student information.
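
To make the idea concrete, here is a minimal sketch of two of these safeguards: keeping only the fields a tool actually needs, and replacing the student identifier with a keyed hash. The field names, the `ALLOWED_FIELDS` allow-list, and the helper names are assumptions made for illustration. Note that a keyed hash is pseudonymization rather than full anonymization, since anyone holding the key can re-link records, so the key itself needs strict access controls.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this imaginary tool needs.
ALLOWED_FIELDS = {"grade_level", "quiz_score"}


def pseudonymize_id(student_id: str, secret_key: bytes) -> str:
    """Replace a student ID with a keyed SHA-256 hash (HMAC)."""
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Drop every field not on the allow-list and pseudonymize the ID."""
    cleaned = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    cleaned["student_ref"] = pseudonymize_id(record["student_id"], secret_key)
    return cleaned


# Illustrative, made-up record and key. A real key would come from a
# secrets manager, never from source code.
demo_key = b"example-key-for-illustration-only"
raw = {
    "student_id": "S-1001",
    "name": "Example Student",
    "home_address": "1 Example Street",
    "grade_level": 7,
    "quiz_score": 88,
}
safe = minimize_record(raw, demo_key)
print(sorted(safe.keys()))
```

The name and home address never leave the institution in this sketch, and the same student always maps to the same `student_ref`, so progress can still be tracked over time.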

6. Ensuring Equity and Accessibility

Monitor AI outcomes to ensure all students, regardless of background or ability, benefit equally. Provide alternative non-AI options for those who opt out or face access barriers.

Case Studies: AI in Education and Ethical Impact

Case Study 1: Reducing Bias in Automated Essay Scoring

A major school district piloted AI-driven essay grading. Initial reviews found that the system scored essays written by non-native English speakers significantly lower. Through stakeholder collaboration, the district and AI provider retrained the algorithm, incorporating broader linguistic diversity. The result: a measurable decrease in biased scoring and greater trust in automated assessment.

Case Study 2: Data Privacy in Adaptive Learning Platforms

A leading adaptive learning company adopted a “privacy by design” approach, limiting data collection to only what was strictly necessary and encrypting all personal data. They provided an open dashboard for students and parents to track and manage the data held on them, enabling true consent and transparency.

Practical Tips for Teachers and Schools

- Read the Fine Print: Review privacy policies and terms of all educational AI tools before classroom adoption.

- Start Small: Pilot AI tools with a small group, gather feedback, and scale up only if ethical standards are met.

- Foster Digital Citizenship: Teach students about their digital rights, privacy, and the potential risks and benefits of AI technologies.

- Collaborate: Work closely with IT, legal, and administrative teams to ensure compliance with privacy and data protection laws.

Conclusion: Towards an Ethical and Inclusive AI-Powered Education

The ethical considerations of AI in education are multifaceted and ever-evolving. While AI holds the promise of more personalized, effective, and inclusive learning experiences, it can also amplify risks around privacy, bias, transparency, and autonomy if left unchecked. By embracing robust ethical frameworks, fostering collaboration among stakeholders, and placing student welfare at the heart of every decision, educators and technology providers can harness the power of artificial intelligence responsibly.

As AI becomes more deeply integrated into classrooms worldwide, prioritizing ethics is not just recommended—it’s essential. Only through responsible integration can we ensure that today’s learners are empowered, protected, and prepared for the future.